March 2, 2026

Every problem is a design problem. This one is public safety.

The role voters must play in regulating the government's use of AI

A war over the U.S. government’s use of AI played out in an actual war in the Middle East.

On Friday, the Pentagon abruptly blacklisted Anthropic, the maker of the popular Claude LLM, after the company refused to lift key guardrails on the military’s use of the model for potential autonomous weaponry and widespread mass surveillance.

In short order, rival OpenAI inked a deal with the government, replacing Anthropic and positioning itself as both safety-oriented and a leader in the field. “In all of our interactions, the DoW displayed a deep respect for safety and a desire to partner to achieve the best possible outcome,” Sam Altman, OpenAI’s chief executive, said on X.

The safety problem, say experts, is not just the models themselves, but the ability to profile real people with information that LLMs can analyze: chatbot conversations, Google searches, GPS locations, credit card and other transactions, you know, everything. There is a clear dotted line from an untrustworthy government to scenarios in which it could launch weapons without human oversight or use the technology to spy on and threaten Americans for capricious reasons.

“The Pentagon had kept trying to leave itself little escape hatches in the agreements that it proposed to Anthropic. It would pledge not to use Anthropic’s AI for mass domestic surveillance or for fully autonomous killing machines, but then qualify those pledges with loophole-y phrases like as appropriate—suggesting that the terms were subject to change, based on the administration’s interpretation of a given situation,” says Ross Andersen in the Atlantic.

And while Anthropic temporarily enjoyed a moment of popularity, briefly becoming number one in U.S. app downloads, the celebration is premature, says AI researcher and critic Timnit Gebru. “OpenAI & Anthropic were advertised as ‘AI safety’ companies to stop fictional super intelligent machines from rendering us extinct,” she posted on X. “And they do that by partnering with the military and Palantir, organizations whose jobs are to kill people? Don’t fall for the theatrics guys.”

She is not alone. “Ironically Anthropic’s description of the deal: ‘New language framed as compromise was paired with legalese that would allow those safeguards to be disregarded at will.’ Is also how I would describe Anthropic’s safety approach and recent dropping of key principles,” posted Heidy Khlaaf, PhD, a safety expert and Chief AI Scientist with the AI Now Institute.

Losing a $200 million Pentagon contract is an immediate blow to Anthropic; the long-term impact could be far worse. President Trump made the threat quite clear:

“The Leftwing nut jobs at Anthropic have made a DISASTROUS MISTAKE trying to STRONG-ARM the Department of War, and force them to obey their Terms of Service instead of our Constitution,” he wrote in a post on Truth Social. “Therefore, I am directing EVERY Federal Agency in the United States Government to IMMEDIATELY CEASE all use of Anthropic’s technology. We don’t need it, we don’t want it, and will not do business with them again!” He announced a six-month phase-out and set out his expectations for a new attitude. “Anthropic better get their act together, and be helpful during this phase out period, or I will use the Full Power of the Presidency to make them comply, with major civil and criminal consequences to follow.”

That said, the sides are now clearly drawn, and the potential for AI misuse by governments is an urgent problem, says Frank Kendall.

Kendall, who served as the secretary of the Air Force from 2021 to 2025, says Anthropic’s attempt to negotiate the terms of the contract, no matter how noble (or not), was doomed from the start. “The government cannot be expected to negotiate provisions like those Anthropic is demanding with every supplier. It would be a nightmare to administer and unenforceable,” he says.

Instead, he’s calling for Congressional regulation as the only way forward. “We regulate most of the products we buy, from automobiles to airplanes to appliances,” he says. “Congress needs to pass, as part of comprehensive A.I. regulation, restrictions on the most dangerous uses of these tools despite the Trump administration’s strong resistance to such limits.”

Logically, if you believe this, then informed civic engagement matters now more than ever. Frankly, I’ll take whatever we’ve got (marches, meetings, red crochet hats, snarky fonts, fact-checking wizards) if it gets us to better candidates, meaningful checks and balances, and true representation.

For that, we need a full-throated defense of voting rights, voter safety, and access, and everyone needs to get on board. “Souls to the polls” has never been more important than now, when the souls of the world are at stake.

Ellen McGirt

Editor-in-Chief

Ellen@designobserver.com

P.S. Did someone who loves you send you this newsletter? Welcome! Subscribe here.

This edition of The Observatory was edited by Rachel Paese.

This is the web version of The Observatory, our (now weekly) dispatch from the editors and contributors at Design Observer. Want it in your inbox? Sign up here. While you’re at it, come say hi on YouTube, Reddit, or Bluesky — and don’t miss the latest gigs on our Job Board.

The big think

It’s time to opt-in to the AI conversation.

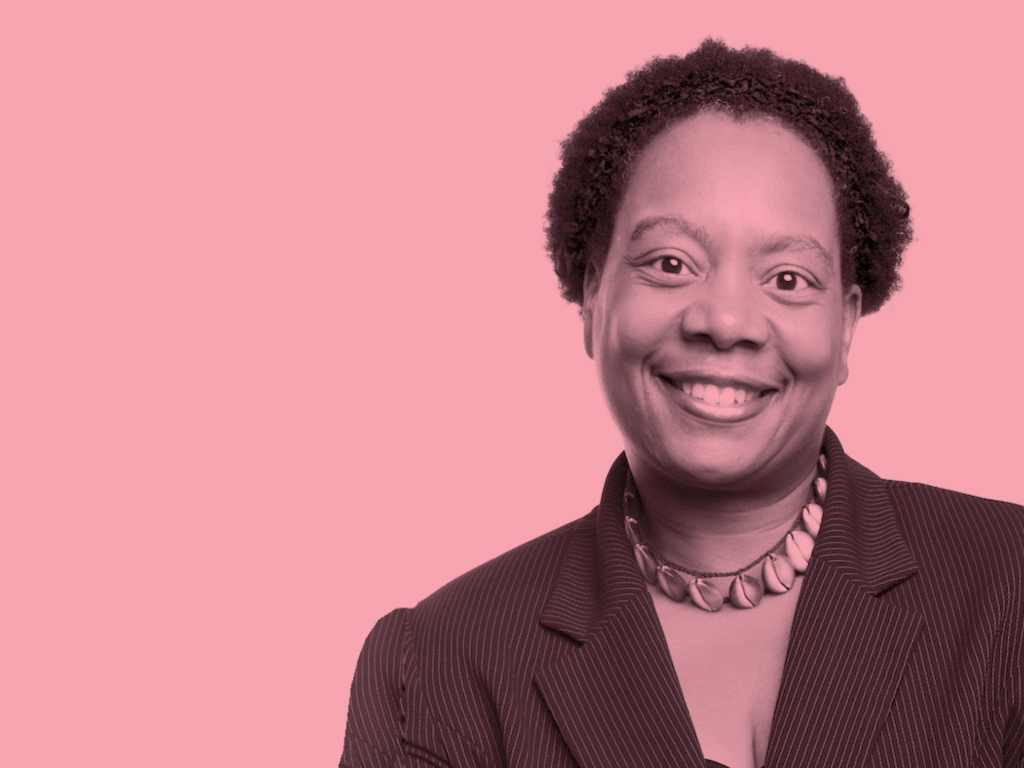

It isn’t lost on most observers that the most informed and skeptical voices about AI come from experts in communities that are systemically underrepresented in technology circles, many of them women of color. Their voices are becoming instrumental guides, pointing out the potential promises and pitfalls of new technology while encouraging ordinary people to better understand it themselves.

Being excluded is a terrible thing. But self-exclusion is just as problematic.

I’m reminded of a conversation with Ovetta Sampson, then the director of user experience machine learning at Google. Sampson’s star turn on Season 11 of the DB|BD podcast delivered a clear message: it is up to ordinary people to hold big tech accountable.

“There are more ethical ways that we can make AI but if you don’t know how it’s made, you can’t be an activist for those alternative ways, right?” Sampson says. “And it’s really hard. There are definitely Joy [Buolamwini] and Timnit [Gebru] and some amazing other Black women in AI who are fighting this fight…But it’s really important that folks on the other end of these model outcomes join us. Join us in really asking tough questions about your data.”

Sampson shared her journey as an unlikely person in tech, which started with asking tough questions.

“I think there were a lot of obstacles and barriers to the path that I took to Google,” she says. “Just because of life circumstances, and that includes institutional racism, sexism, all those things.” She explained that growing up on the South Side of Chicago, her community was underresourced and her high school didn’t even have a computer science class. “So, I became a writer…which was really about activism and changing the narrative about my neighborhood.”

Sampson’s personal design motto is a beacon of its own: “to amplify the beauty of humanity with design while avoiding practices that exploit its fragility.”

Sounds like a good plan.

Activism in AI with Google’s Ovetta Sampson

Observed

Inside the Clawdbot phenomenon and the return of the Mac mini. In late 2024, Vienna-based software engineer Peter Steinberger released a new agent that seemed to immediately outperform both ChatGPT and Claude and eliminated the need for pricey subscriptions. “People wanted their AI assistants to actually assist them without becoming another subscription vampire or another company with access to their entire digital life.” Suddenly, it’s a thing.

Is design dead at Apple? “I was wrong about macOS 26 – its design is far worse than I first thought.” “The botched Apple Intelligence rollout has to be one of Apple’s most embarrassing debacles of the last decade.” Sure, but it comes in red! Hurry up and Think Different(er), Apple.

The Actor Awards are always a stylish show. The 2026 SAG Awards took place last night, with the theme “Reimagining Hollywood Glamour from the ’20s and ’30s.” Vogue has a round-up of the best looks.

Phil Gilbert also says it’s time for designers to lead. The man who helped transform IBM into a design-led innovation powerhouse says imagination will win the day. “We’re in a technical disruption cycle. AI requires integration, tooling, data protection — it’s expensive. Companies shift investment. But in the post-integration period, the value will come from people who imagine multiple futures, do divergent thinking, and understand users.”

America by Design, the initiative helmed by Joe Gebbia, the US government’s first-ever chief design officer, has already launched about ten new websites, mostly focused on Trump-branded stuff. The reports are mixed. (Unless you love golden eagles, golden scrolls, and accessibility issues.)

Job board

Senior Industrial Designer at Espiritu Studio, Salt Lake City, UT

Interior Designer at TruexCullins Architecture & Interior Design, Burlington, VT

Senior Industrial Designer at Zen Design Group, Auburn Hills, MI

End marks

Happy Women’s History Month!

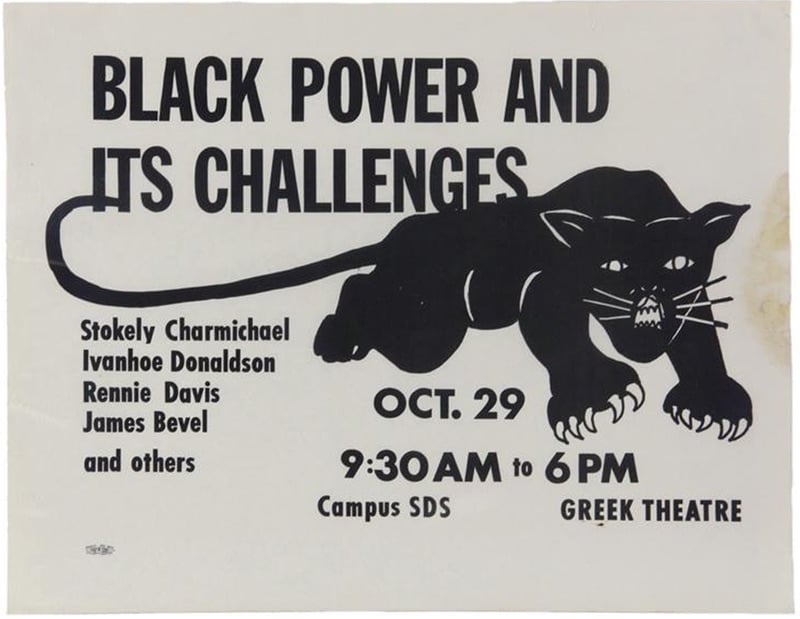

The Women Behind the Black Panther Party Logo

How three women shaped what became one of the most recognizable political graphics of the 20th century.


By Ellen McGirt



Ellen McGirt is an author, podcaster, speaker, community builder, and award-winning business journalist. She is the editor-in-chief of Design Observer, a media company that has maintained the same clear vision for more than two decades: to expand the definition of design in service of a better world. Ellen established the inclusive leadership beat at Fortune in 2016 with raceAhead, an award-winning newsletter on race, culture, and business. The Fortune, Time, Money, and Fast Company alumna has published over twenty magazine cover stories throughout her twenty-year career, exploring the people and ideas changing business for good. Ask her about fly fishing if you get the chance.
